Some things I learnt from working on big frontend codebases

On dev.to, you can find the latest version of the article with more content.

As of May 2023, I have worked on two very big front-end (React+TypeScript) codebases: WorkWave RouteManager and the Hasura Console. Both are ~250K LOC, and the two experiences were very different. In this article, I report the most important problems I saw while working on them: things that are usually not a big deal in smaller codebases, but that become a source of big friction as the app scales.

My direct experience

First of all, let me describe the main characteristics of the two projects:

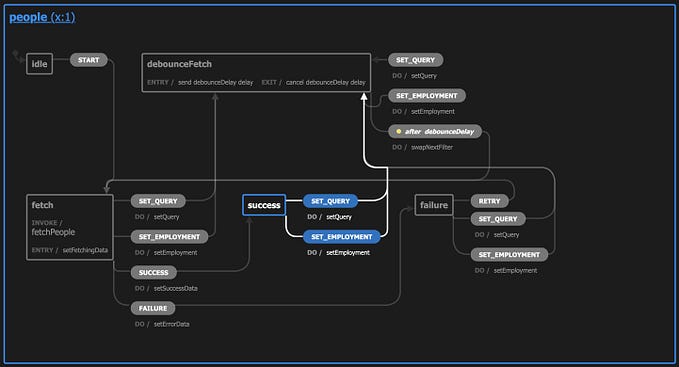

- WorkWave RouteManager: the product is very complex, partly because some back-end limitations force the front-end to handle extra complexity. Nevertheless, thanks to the strong presence of a great front-end architect (Matteo Ronchi, by the way), the codebase can be considered front-end perfection. The codebase is completely new (rewritten from scratch between 2020 and 2022), new tools are tried and adopted at a high cadence (for instance, we started using Recoil way earlier than the rest of the world, we migrated the codebase from Webpack to Vite in 2021, etc.), and the coding patterns are respected everywhere. Here, I was the team leader of the front-end team.

- Hasura Console: the complexity of the project is not so high, but the startup needs (pushing out new features as soon as possible) resulted in some technical debt and antipatterns that are now big friction points for the developers working on it. Here, I joined as a senior front-end engineer and then became the tech lead of the platform team.

The following is a non-exhaustive list of examples drawn from the characteristics, activities, and problems I saw, grouped by category.

Generic approaches

Managing more cases than needed

This seemingly innocent approach leads to big problems and wasted time when you have to refactor a lot of code while preserving the existing features. Some examples are:

- Components/functions with optional props/parameters and fallback default values: when you need to refactor the components, you need to understand who the indirect consumers of the default values are… But what happens if the usage of the default values is driven by network responses? You need to understand and simulate all the edge cases! And what happens if you find out that the default values are not used at all? I once saw a colleague of mine waste four hours during a refactor because of an unused default value…

- Types declared as a generic string or a generic Record<string, any> when, in reality, the possible values are known in advance. The result is a lot of code that handles generic strings and objects, while handling the real, finite set of cases would be 10x easier. Again, when you need to refactor code that manages "generic" values, you are going to waste time.
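To make the difference concrete, here is a minimal sketch that assumes a hypothetical order status with three known values (the OrderStatus type and both functions are illustrative, not taken from either codebase). The generic version silently funnels typos and forgotten cases into a fallback branch, while the finite version lets TypeScript check that every case is handled.

```typescript
// Hypothetical domain: the possible values of "status" are known in advance.
type OrderStatus = 'ready' | 'inProgress' | 'complete'

// ❌ Generic: every consumer must cope with arbitrary strings,
// and a typo like 'completed' quietly ends up in the fallback.
function getStatusLabelLoose(status: string): string {
  if (status === 'ready') return 'Ready'
  if (status === 'inProgress') return 'In progress'
  if (status === 'complete') return 'Complete'
  return 'Unknown' // which real cases end up here? Nobody knows.
}

// ✅ Finite: TypeScript knows all the cases and checks exhaustiveness.
function getStatusLabel(status: OrderStatus): string {
  switch (status) {
    case 'ready':
      return 'Ready'
    case 'inProgress':
      return 'In progress'
    case 'complete':
      return 'Complete'
    default: {
      // If a new member is added to OrderStatus, this assignment stops compiling.
      const unexpected: never = status
      throw new Error(`Unexpected status: ${String(unexpected)}`)
    }
  }
}

console.log(getStatusLabel('inProgress')) // prints "In progress"
console.log(getStatusLabelLoose('completed')) // prints "Unknown"
```

With the finite version, adding a fourth status makes the compiler point at every switch that must handle it, which is exactly the information you need during a refactor.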

I touched on these topics in my How I ease the next developer reading my code article.

Leaving dead code around

You refactor a module, remove an import of an external module, and you are fine. But what happens if your module was the last consumer of the external one? The external module becomes dead code: it will not be bundled into the application (nice), but it will confuse everyone browsing the codebase looking for solutions/utilities/patterns, and it will confuse the future refactorer, who will blame whoever left the unused module there!

And obviously, it’s a waterfall… the external module could import other unused modules, which could in turn depend on an NPM dependency that could be removed from the package.json, etc.
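The waterfall can be pictured as a reachability problem on the import graph. The following is a minimal sketch, assuming a hypothetical, hand-written ModuleGraph (a real project would extract it from the source files or rely on a dedicated dead-code detection tool): every module that cannot be reached from an entry point is dead, and removing a single import can make a whole chain unreachable at once.

```typescript
// Hypothetical, simplified module graph: each module lists the modules it imports.
type ModuleGraph = Record<string, string[]>

// Returns the modules that cannot be reached from any entry point.
function findUnreachableModules(graph: ModuleGraph, entryPoints: string[]): string[] {
  const reachable = new Set<string>()
  const queue = [...entryPoints]

  // Breadth-first visit of everything the entry points (transitively) import.
  while (queue.length > 0) {
    const current = queue.shift()
    if (current === undefined || reachable.has(current)) continue // also stops on import cycles
    reachable.add(current)
    for (const imported of graph[current] ?? []) queue.push(imported)
  }

  return Object.keys(graph).filter((moduleName) => !reachable.has(moduleName))
}

// After the refactor, "feature.ts" no longer imports "oldHelper.ts"...
const graph: ModuleGraph = {
  'main.ts': ['feature.ts'],
  'feature.ts': [],
  'oldHelper.ts': ['oldFormatter.ts'],
  'oldFormatter.ts': ['left-pad'], // an NPM dependency that could now leave package.json
  'left-pad': [],
}

// ...so the whole chain becomes dead code:
// returns ['oldHelper.ts', 'oldFormatter.ts', 'left-pad']
console.log(findUnreachableModules(graph, ['main.ts']))
```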

Internal code dependencies and boundaries

Not enforcing strong boundaries among product features/libraries/utilities (through ESLint rules or through a proper monorepo structure) brings unexpected breaks as a result of innocent changes. Something like FeatureA importing from internal modules of FeatureB, which imports from internal modules of FeatureA and FeatureC, etc., leads you to break 50% of the product by changing a simple prop in one of FeatureA’s components. And if you have a lot of JavaScript modules never converted to TypeScript, you will also have a hard time understanding the dependency tree among features…

I strongly suggest reading React project structure for scale: decomposition, layers and hierarchy.
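In practice, boundaries are usually enforced through lint rules (for instance ESLint’s built-in no-restricted-imports rule) or through the package boundaries of a monorepo. The check behind such a rule can be sketched as follows, assuming a hypothetical convention where each feature lives in src/features/<name>/ and only exposes its public index file to the other features.

```typescript
// Assumed (hypothetical) convention: features live in src/features/<name>/ and other
// features may only import their public entry point (index, index.ts or index.tsx).
const FEATURE_PATH = /^src\/features\/([^/]+)\/(.+)$/
const PUBLIC_ENTRY = /^index(\.tsx?)?$/

function getFeature(path: string): { name: string; rest: string } | undefined {
  const match = FEATURE_PATH.exec(path)
  return match ? { name: match[1], rest: match[2] } : undefined
}

// Returns true when `importer` reaches into the internals of another feature.
function violatesBoundary(importer: string, imported: string): boolean {
  const from = getFeature(importer)
  const to = getFeature(imported)
  if (!to) return false // not a feature module (e.g. a shared utility)
  if (from?.name === to.name) return false // a feature can use its own internals
  return !PUBLIC_ENTRY.test(to.rest) // other features: only the public entry point
}

console.log(violatesBoundary('src/features/featureA/Form.tsx', 'src/features/featureB/internal/useData')) // true
console.log(violatesBoundary('src/features/featureA/Form.tsx', 'src/features/featureB/index')) // false
```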

Implicit dependencies

They are the hardest things to deal with. Some examples?

- Global styles that impact your UI’s look and feel in unexpected ways

- A global listener on some HTML attributes that does things without the developer knowing about it

- A generic MSW mock server that all the tests use, where it’s impossible to know which handlers are used by which tests

Again, pity the refactorer who has to deal with those. Instead, explicit imports, self-explanatory HTML attributes, inversion of control, etc. allow you to easily recognize who consumes what.
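Inversion of control is what makes a dependency visible. Here is a minimal sketch with hypothetical Order, FetchOrders, and countCompletedOrders names: the function receives its data source as a parameter instead of relying on something global, so a reader (and a test) can see exactly what it consumes.

```typescript
// Hypothetical domain type, for illustration only.
type Order = { id: string; status: 'ready' | 'inProgress' | 'complete' }

// The dependency is explicit: the caller decides where orders come from.
type FetchOrders = () => Promise<Order[]>

async function countCompletedOrders(fetchOrders: FetchOrders): Promise<number> {
  const orders = await fetchOrders()
  return orders.filter((order) => order.status === 'complete').length
}

// In a test, the fake lives right next to the code that uses it,
// instead of hiding in a global mock server shared by every test.
const fakeFetchOrders: FetchOrders = async () => [
  { id: '1', status: 'complete' },
  { id: '2', status: 'ready' },
]

countCompletedOrders(fakeFetchOrders).then((count) => console.log(count)) // prints 1
```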

Big modules

This is another very subjective topic: I prefer many small, single-function modules over long ones. I know that a lot of people prefer the opposite, so it’s mostly a matter of respecting what is important for the team.

Code readability

I’m a fan of the book The Art of Readable Code, and after spending 2.5 years working on a big and complex codebase with zero (!!!) tests, I can tell how important code readability is.

This also really depends on the number of developers working on a codebase, but I think it’s worth investing in some shared coding patterns that must be enforced in PRs (or, even better, automated through Prettier or similar tools).

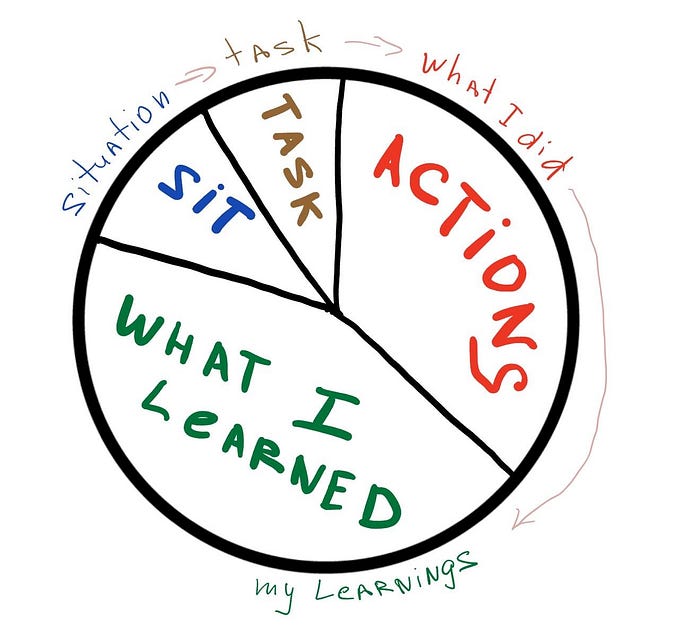

I publicly shared the ones we were using in WorkWave in this 7-article series: RouteManager UI coding patterns. The internal rule we had was that “patterns must be recognizable in the code, but not authors”.

No silver bullets here; the important thing, IMO, is that everyone keeps readability and refactorability in mind when writing code.

Uniformity is better than perfection

If you are about to refactor a module but do not have time to also refactor the two modules coupled to it… consider not refactoring it at all, so that the three modules stay uniform (uniformity means predictability and less ambiguity).

Workflow

No PR description and big PRs

That’s such an important topic that I wrote four articles about it. Start with the most important one: Support the Reviewers with detailed Pull Request descriptions

And if you are curious, you can dig into some real-life examples I documented here:

- A Case History: Analysing Hasura Console’s code review process

- https://dev.to/noriste/re-building-a-branch-and-telling-a-story-to-ease-the-code-review-485o

- Improving Hasura’s Internal PR Review process

Suggesting big changes and approaches during code reviews

PRs are not the best place to suggest big changes or a completely different approach, because you are indirectly blocking the release of a feature or a fix. Sometimes it is crucial to do it, but the initial analysis and estimation steps, pair programming sessions, etc. may work better to help shape the approach and the code.

When to fix the technical debt?

That’s a great question, and there is no silver bullet here… I can only share my experience so far:

- In WorkWave, we dealt with technical debt on a daily basis: fixing tech debt was part of every engineer’s everyday job. This can slow down feature development, but in exchange you gain deep knowledge of the context and keep the codebase in good shape. It’s like knowingly slowing down today’s development to keep tomorrow’s development at the current pace.

- In Hasura, we cannot deal with technical debt because of the need to deliver new features. Over the years, this has resulted in many front-end developers going slower than their potential, sometimes introducing bugs, and offering an imperfect UX to the customers.

You can read more about a good example of Hasura’s problems in my Frontend Platform use case — Enabling features and hiding the distribution problems article. Also, you could read what happened to our E2E tests here after all the tech debt problems we were facing.

No front-end oriented back-end APIs

By “no front-end oriented”, I mean APIs that are not designed with the end customers’ UX in mind, with a lot of complexity pushed to the front-end to keep back-end development lean (e.g. embedding a lot of DB queries in the front-end instead of exposing a new API from the back-end). This approach is natural during the initial evolution of a product, but it leads to increasingly complex front-ends when the product needs to scale.

Never updating the NPM dependencies

Again, based on my own experiences:

- In WorkWave, I updated the external dependencies on a weekly basis. It usually took me 30 minutes, sometimes 4 hours.

- In Hasura, we usually did not update them, and we found ourselves enabling legacy-peer-deps by default, leveraging NPM’s overrides, and being unable to update any GraphQL-related dependency. Not to mention the many PRs that completely broke the build because of a new dependency.

Since maintaining dependencies has a cost, you should carefully consider whether you really need an external dependency. Is it maintained? Does it solve a complex problem I prefer to delegate to an external party?

TypeScript

Bad practice: Generic TypeScript types and optional properties

It is very common to find types like this:

```typescript
type Order = {
  status: string
  name: string
  description?: string
  at?: Location
  expectedDelivery?: Date
  deliveredOn?: Date
}
```

that should be represented with a discriminated union like this:

```typescript
type Order = {
  name: string
  description?: string
  at: Location
} & ({
  status: 'ready'
} | {
  status: 'inProgress'
  expectedDelivery: Date
} | {
  status: 'complete'
  expectedDelivery: Date
  deliveredOn: Date
})
```

which is more verbose but acts as pure domain documentation, removes tons of ambiguity, and allows writing better and clearer code.

The topic is so important and has so many great advantages that I wrote a dedicated article to the topic: How I ease the next developer reading my code.
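One of the practical advantages of the discriminated union is narrowing: after checking status, TypeScript knows which properties exist. Here is a small sketch built on a trimmed-down copy of the Order type above (without description and at, to keep it short); the getDeliveryInfo function and its output format are hypothetical.

```typescript
// A trimmed-down version of the discriminated Order type.
type Order = { name: string } & (
  | { status: 'ready' }
  | { status: 'inProgress'; expectedDelivery: Date }
  | { status: 'complete'; expectedDelivery: Date; deliveredOn: Date }
)

// Formats a date as YYYY-MM-DD (UTC).
const formatDay = (date: Date): string => date.toISOString().slice(0, 10)

// Checking `status` narrows the type: in each branch TypeScript knows exactly
// which dates exist, so no optional chaining or "just in case" fallbacks are needed.
function getDeliveryInfo(order: Order): string {
  switch (order.status) {
    case 'ready':
      // order.deliveredOn would be a compile error here.
      return `${order.name}: not shipped yet`
    case 'inProgress':
      return `${order.name}: expected on ${formatDay(order.expectedDelivery)}`
    case 'complete': {
      const late = order.deliveredOn.getTime() > order.expectedDelivery.getTime()
      return `${order.name}: delivered${late ? ' late' : ''} on ${formatDay(order.deliveredOn)}`
    }
  }
}

// prints "Order #1: delivered late on 2023-05-03"
console.log(getDeliveryInfo({
  name: 'Order #1',
  status: 'complete',
  expectedDelivery: new Date('2023-05-01'),
  deliveredOn: new Date('2023-05-03'),
}))
```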

Type assertions (as)

Type assertions are a way to tell TypeScript “shut up, I know what I’m doing”, but the reality is that you rarely know what you are doing, especially when it comes to the consequences of what you are doing…

This happens very frequently in tests, where big objects are “typed” with type assertions, so the objects silently drift out of date compared to the original type. You realize it only when the tests fail, and by then you have left room for a lot of doubts about why they are failing…

The solution: type everything correctly and, if needed, prefer @ts-expect-error with an explanation of the error you expect.

Read Why You Should Avoid Type Assertions in TypeScript to know more about the topic (and keep in mind that the JSON.parse example shown there can also be typed using Zod parsers).
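A minimal sketch of the problem, using a hypothetical Order type and a hypothetical createTestOrder factory (one common way to build test fixtures, not the only one): the assertion compiles even though a required property is missing, while a typed factory forces the fixture to stay aligned with the type.

```typescript
type Order = {
  id: string
  name: string
  status: 'ready' | 'inProgress' | 'complete'
}

// ❌ The assertion compiles even though `status` is missing: TypeScript just trusts us.
const assertedOrder = { id: '1', name: 'Milk' } as Order

// ✅ An annotation would make TypeScript check the whole object instead:
// const annotatedOrder: Order = { id: '1', name: 'Milk' } // compile error: 'status' is missing

// ✅ A typed factory keeps fixtures aligned with the real type: if Order gains
// a new required property, this function stops compiling and points at the fixture.
function createTestOrder(overrides: Partial<Order> = {}): Order {
  return { id: '1', name: 'Milk', status: 'ready', ...overrides }
}

console.log(assertedOrder.status) // prints undefined, although the type says it is always set
console.log(createTestOrder({ status: 'complete' }).status) // prints "complete"
```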

@ts-ignore instead of @ts-expect-error and broad scope

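Unlike @ts-ignore (which is just another way to shut TypeScript up), @ts-expect-error lets issues surface on their own once they are fixed in the future (e.g. by a TypeScript upgrade): TypeScript reports an unused @ts-expect-error directive, so you know you can remove it.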

Moreover, @ts-expect-error should be applied to the smallest possible scope, to avoid TypeScript accepting unintended errors.

```typescript
// ❌ don't
// @ts-expect-error TS 4.5.2 does not infer correctly the type of typedChildren.
return React.cloneElement(typedChildren, htmlAttributes); // <-- the whole line is impacted by @ts-expect-error

// ✅ do
return React.cloneElement(
  // @ts-expect-error TS 4.5.2 does not infer correctly the type of typedChildren.
  typedChildren, // <-- only typedChildren is impacted by @ts-expect-error
  htmlAttributes
);
```

any instead of unknown

TypeScript’s any gives you the freedom (generally a bad thing) to do anything you want with a variable, while unknown forces you to strictly check the runtime value before consuming it. any is like turning TypeScript off, while unknown is like turning on all the possible TypeScript alerts.
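The difference shows up as soon as you try to use the value. In this small sketch (the function names are hypothetical), the any version compiles and returns undefined at runtime for a number, while the unknown version does not compile until the value has been checked.

```typescript
// `any`: TypeScript lets everything through, so a wrong assumption
// only shows up at runtime.
function getLengthAny(value: any): number {
  return value.length // no compile error, even if value is a number
}

// `unknown`: TypeScript refuses to let us touch the value until we check it.
function getLengthUnknown(value: unknown): number {
  // return value.length // ❌ compile error: 'value' is of type 'unknown'
  if (typeof value === 'string' || Array.isArray(value)) {
    return value.length
  }
  return 0
}

console.log(getLengthAny(42)) // prints undefined, despite the `number` return type
console.log(getLengthUnknown(42)) // prints 0
console.log(getLengthUnknown('abc')) // prints 3
```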

ESLint rules kept as warnings

ESLint warnings are useless: they only add a lot of background noise and are completely ignored. Rules should be either on (as errors) or off, but never warnings.

Validating the external data

In the software world, the rule of “never trust what the frontend sends to the backend” is crucial, but I’d say that in a front-end application armed with TypeScript types, you should not trust any kind of external data. Server responses, query strings, local storage, JSON.parse, etc. are potential sources of runtime problems if not validated through type guards (read my Keeping TypeScript Type Guards safe and up to date article) or, even better, Zod parsers.
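As an example, here is a minimal sketch of validating data read from local storage with a hand-written type guard, assuming a hypothetical Preferences shape and default values. A Zod schema would play the same role; this version only uses the language and the standard library so it stays self-contained.

```typescript
// Hypothetical shape of user preferences persisted in the browser.
type Preferences = {
  theme: 'light' | 'dark'
  pageSize: number
}

const DEFAULT_PREFERENCES: Preferences = { theme: 'light', pageSize: 20 }

// A type guard: it checks the runtime value instead of trusting it.
function isPreferences(value: unknown): value is Preferences {
  if (typeof value !== 'object' || value === null) return false
  // Reflect.get returns `any`; storing the result as `unknown` keeps the checks honest.
  const theme: unknown = Reflect.get(value, 'theme')
  const pageSize: unknown = Reflect.get(value, 'pageSize')
  return (
    (theme === 'light' || theme === 'dark') &&
    typeof pageSize === 'number' &&
    Number.isInteger(pageSize) &&
    pageSize > 0
  )
}

// JSON.parse returns `any`: treat its result as `unknown` and validate it.
function parsePreferences(raw: string | null): Preferences {
  if (raw === null) return DEFAULT_PREFERENCES
  try {
    const parsed: unknown = JSON.parse(raw)
    return isPreferences(parsed) ? parsed : DEFAULT_PREFERENCES
  } catch {
    return DEFAULT_PREFERENCES // malformed JSON
  }
}

console.log(parsePreferences('{"theme":"dark","pageSize":50}').theme) // prints "dark"
console.log(parsePreferences('{"theme":"blue"}') === DEFAULT_PREFERENCES) // prints true
```

In the browser, raw would typically come from something like localStorage.getItem('preferences'), where the key is, again, hypothetical.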

React

HTML templating instead of clear JSX

JSX that includes a lot of conditions, loops, ternaries, etc. is hard to read and sometimes unpredictable. I call it “HTML templating”. Instead, smaller components with a clear separation of concerns are a better way to write clear and predictable JSX.

Again, I touched on this topic in my How I ease the next developer reading my code article.

Lots of React hooks and logic in the component’s code

I’m a big fan of moving a React component’s logic into custom hooks whose names clearly indicate their scope, and then consuming them inside the component. The reason is always the same: long code before the JSX makes the JSX harder to read.

Tests

Bad tests

As a test lover and instructor (I teach front-end testing at private companies and conferences), I can say that bad tests are the result of a lack of experience with this topic, and the only solution is help, mentoring, help, mentoring, help, mentoring, etc.

Anyway, the false confidence that tests can offer is a big problem in every codebase.

I suggest reading two of my articles:

- From unreadable React Component Tests to simple, stupid ones

- Improving UI tests’ code with debugging in mind

E2E tests everywhere

E2E tests do not scale well because of the need for real data, a real back-end, etc.

Also, in this case, I suggest reading some of my articles:

- Decouple the back-end and front-end test through Contract Testing

- Improving UI tests’ code with debugging in mind

- Front-end productivity boost: Cypress as your main development browser

Developer Experience

Deprecated APIs

When code is marked as @deprecated, the IDE shows it with a strikethrough and displays the documentation, helping developers realize that they should not use it.

An example:

```typescript
/**
 * @deprecated Please use the new toast API /new-components/Toasts/hasuraToast.tsx
 */
export const showNotification = () => { /* ... */ }
```

Care about the browser logs

Console warnings (coming from ESLint, TypeScript, React, Storybook, etc.) add a lot of background noise that mixes with the important logs you may want to trace. Take care of them and remove them, so developers do not end up ignoring your own important alerts because of the noise.

Developer alerts for unexpected things

Runtime data (e.g. server responses) may not match the front-end types. If you do not want to break the user flow by throwing an error, at least track the error through something that can alert you about it (like Sentry or any other tool), so that little time passes between the error surfacing and you fixing it.
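A minimal sketch of this idea, assuming a hypothetical Order type and an injected ReportError callback (in a real app it could forward to Sentry or a similar tool; the callback signature is an assumption, not any tool’s actual API): valid items reach the UI, and unexpected ones are reported instead of crashing the page.

```typescript
// Hypothetical domain type, for illustration only.
type Order = { id: string; status: 'ready' | 'inProgress' | 'complete' }

// Hypothetical reporter signature: in production it could forward to an error tracker.
type ReportError = (error: Error, context: Record<string, unknown>) => void

const KNOWN_STATUSES: ReadonlyArray<string> = ['ready', 'inProgress', 'complete']

// Type guard: checks the runtime shape instead of trusting the server.
function isOrder(value: unknown): value is Order {
  if (typeof value !== 'object' || value === null) return false
  const id: unknown = Reflect.get(value, 'id')
  const status: unknown = Reflect.get(value, 'status')
  return typeof id === 'string' && typeof status === 'string' && KNOWN_STATUSES.includes(status)
}

// Keeps the valid orders for the UI and reports the unexpected ones
// instead of throwing and breaking the user flow.
function sanitizeOrders(payload: unknown[], reportError: ReportError): Order[] {
  const orders: Order[] = []
  for (const item of payload) {
    if (isOrder(item)) {
      orders.push(item)
    } else {
      reportError(new Error('Unexpected order received from the server'), { item })
    }
  }
  return orders
}

// Usage with a console-based reporter standing in for a real error tracker.
const visibleOrders = sanitizeOrders(
  [{ id: '1', status: 'ready' }, { id: '2', status: 'cancelled' }],
  (error, context) => console.warn(error.message, context),
)
console.log(visibleOrders.length) // prints 1
```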

React-only APIs

If you are creating an internal library, prefer to expose only React APIs. The big advantage is that you can count on React’s reactivity system: handling dynamic/reactive cases in the future will be easier because you are sure the consumers of your React APIs are re-rendered for free and always deal with fresh data.

Credit where credit is due

Thank you so much to M. Ronchi and N. Beaussart for teaching me so many important things in the last few years ❤️ A lot of the content in this article comes from working with them on a daily basis ❤️

Hi! 👋 I’m Stefano Magni, and I’m a passionate Front-end Engineer, a speaker, and an instructor. I work remotely as a Senior Front-end Engineer for Preply.

I love creating high-quality products, testing and automating everything, learning and sharing my knowledge, helping people, speaking at conferences, and facing new challenges.

You can find me on Twitter, GitHub, LinkedIn. You can find all my recent contributions/talks etc. on my GitHub summary.